Recent posts#

2025-10-30 Action-driven processes for continuous-time control

At the heart of reinforcement learning are actions – decisions made in response to observations of the environment. Actions are equally fundamental in the modeling of stochastic processes, as they trigger discontinuous state transitions and enable the flow of information through large, complex systems. In this paper, we unify the perspectives of stochastic processes and reinforcement learning through action-driven processes, and illustrate their application to spiking neural networks. Leveraging ideas from control-as-inference, we show that minimizing the Kullback-Leibler divergence between a policy-driven true distribution and a reward-driven model distribution for a suitably defined action-driven process is equivalent to maximum entropy reinforcement learning.

2025-09-26 A benchmark for vericoding: formally verified program synthesis

We present and test the largest benchmark for vericoding, LLM-generation of formally verified code from formal specifications - in contrast to vibe coding, which generates potentially buggy code from a natural language description. Our benchmark contains 12,504 formal specifications, with 3,029 in Dafny, 2,334 in Verus/Rust and 7,141 in Lean. Of these, 6,174 are new unseen problems. We find vericoding success rates of 27% in Lean, 44% in Verus/Rust and 82% in Dafny using off-the-shelf LLMs. Adding natural-language descriptions does not significantly improve performance. We also find that LLM progress has improved progress on pure Dafny verification from 68% to 96% over the past year. The benchmark and vericoding results are shared at this https URL.

2025-05-30 Refine yourself a code for great good!

How do you code? Andrej Karpathy who coined the term “vibe coding,” says that for professional coding tasks, he picks single concrete incremental changes and, with AI assistance, plans them, executes them, and evaluates them. Refinement is the process of making incremental changes. It is not just the primary way we design and construct code, but also how we repair them and upgrade them, often collaboratively with other coders, designers and users.

2025-01-10 Relative information and the dual numbers

Relative information (Kullback-Leibler divergence) is a key concept in statistics, machine learning and information theory. In fact, singular learning theory tells us that the generalization error of a learning algorithm depends on the structure of algebraic geometric singularities of relative information. This leads us to wonder, what properties of relative information contribute to its ubiquity? In this talk, we will study fundamental characteristics of conditional relative information I_{q||p}(Y|X) where q, p are joint distributions on random variables X, Y. We define the rig category C of random variables and their conditional maps, as well as the rig category R(e) of dual numbers. Relative information can then be constructed, up to a scalar multiple, via rig monoidal functors from C to R(e). Closely related to this construction is the information cohomology of Baudot, Bennequin and Vigneaux.

2024-10-16 Singular learning, relative information and the dual numbers

Relative information (Kullback-Leibler divergence) is a fundamental concept in statistics, machine learning and information theory. In the first half of the talk, I will define conditional relative information, list its axiomatic properties, and describe how it is used in machine learning. For example, according to Sumio Watanabe’s Singular Learning Theory, the generalization error of a learning algorithm depends on the structure of algebraic geometric singularities of relative information. In the second half of the talk, I will define the rig category Info of random variables and their conditional maps, as well as the rig category R(e) of dual numbers. Relative information can then be constructed, up to a scalar multiple, via rig monoidal functors from Info to R(e). If time permits, I may discuss how this construction relates to the information cohomology of Baudot, Bennequin and Vigneaux, and to the operad derivations of Bradley.

2024-10-01 Program Synthesis

A list of prior work I did with my collaborators and Ph.D. students on dependent type theory and program synthesis: Program Synthesis.

2024-09-24 Safety by shared synthesis

Today, critical infrastructure is vulnerable to both malicious attacks and unintended failures, and these risks are expected to grow in the foreseeable future. Deploying formal verification (FV) across critical cyber physical systems would dramatically improve safety and security, but has historically been too costly to use outside the simplest or most critical subsystems. AI could allow widespread use of FV in years not decades, shifting cyber risks strongly in favor of defense. In this talk, I will outline our report with Atlas Computing on AI-enabled tools for scaling formal verification (https://atlascomputing.org/ai-assisted-fv-toolchain.pdf). I will also discuss some lessons that I learnt along the way, especially about shared synthesis - the collaborative construction of formal specifications, implementations and proofs.

-

Atlas and Topos has been working on a roadmap for AI-assisted code specification and synthesis. The thesis is that formal verification (FV) is our best bet for protecting our sociotechnical systems, especially from human-led and/or AI-enabled attacks. Formal AI-assisted coding could make FV widespread and turn the tide in favor of defense. The roadmap describes concrete projects towards that goal. In this talk, I will discuss various components of the roadmap and show simple demos of what language models can do out of the box. I will also dive into correct-by-construction code synthesis, and how that it preferable to token-by-token code generation.

2024-05-08 AI-assisted coding: correct by construction, not by generation

Atlas and Topos has been working on a roadmap for AI-assisted code specification and synthesis. The thesis is that formal verification (FV) is our best bet for protecting our sociotechnical systems, especially from human-led and/or AI-enabled attacks. Formal AI-assisted coding could make FV widespread and turn the tide in favor of defense. The roadmap describes concrete projects towards that goal. In this talk, I will discuss various components of the roadmap and show simple demos of what language models can do out of the box. I will also dive into correct-by-construction code synthesis, and how that it preferable to token-by-token code generation.

2023-12-11 Online learning for spiking neural networks with relative information rate

Spiking neural networks (SNNs) mimic biological neural networks more closely than feedforward neural networks (FNNs), and are pursued because of the promise of low-energy training and inference. While learning methods such as Hebbian algorithms and STDP (spiking-timing-dependent plasticity) exist, the learning theory of SNNs is poorly understood. In particular, little is known about how SNNs with memory (i.e. latent or hidden variables) can be trained effectively, making it difficult to build large SNNs that rival the performance of large FNNs. In this talk, we attack this problem with the information theory of time series. Using relative information rate, Amari’s em algorithm and stochastic approximation theory, we derive online learning algorithms for SNNs with memory. It turns out that STDP is a consequence of this algorithm, rather than its basis. This is joint work with Tenzin Chan, Chris Hillar and Sarah Marzen.

2023-10-25 Relative Information and the Dual Numbers

Relative information (Kullback-Leibler divergence) is a fundamental concept in statistics, machine learning and information theory.

2023-08-20 References on information cohomology

Some references on the cohomological nature of various information theoretic concepts such as entropy and relative information.

2023-06-26 All you need is relative information

SLT has taught me that relative information (or Kullback-Leibler divergence) is all you need. For instance, the level sets of relative information give us state density functions, whose Fourier, Laplace and Mellin transforms reveal different facets of learning algorithms and their ability to generalize. In this talk, I will outline two ongoing projects involving relative information. The first project explores the information theory of time series, for the purpose of understanding language models and reinforcement learning. Using relative information rate, we derive stochastic learning algorithms for spiking neural networks with memory. The second project explores cohomological generalizations of relative information, building on recent work by Pierre Baudot and Daniel Bennequin and by Tai-Danae Bradley. The hope is to uncover new tools for studying non-statistical spaces, by analyzing the level sets of generalized relative information and their transforms.

2023-04-01 Relative information is motivic

A (Hochschild) cohomological view of relative information must be motivic!

2022-05-28 Likelihood, greed and temperature in sequence learning

Imagine we have a model \(D(w)\) of a dynamical system with states \(s \in S,\) that is parametrized by some weight \(w \in W\). Each state \(s\) comes with a set \(N(s) \subset S\) of neighbors and an associated energy function \(E(s'|s,w) \in \mathbb{R}\) that assigns an energy to each neighbor \(s' \in N(s)\).

2022-01-22 Parametric typeclasses aid generalization in program synthesis

We envision programming being done in top-down fashion. The human describes the goal (e.g. sorting), and the machine reduces it to smaller subgoals based on well-known heuristics (e.g. divide and conquer). The easier subgoals could even be fulfilled automatically. This top-down heuristics approach will be more amenable to machine learning. See my Topos Institute talk for more info.

2022-01-22 Information topos theory - motivation

Relative information (also known as the Kullback-Leibler divergence) is an important fundamental concept in statistical learning and information theory.

2021-09-09 All you need is relative information

Relative information (relative entropy, KL divergence) and variational inference are powerful tools for deriving learning algorithms and their asymptotic properties, for both static systems and dynamic systems. The goal of this talk is to motivate a general online stochastic learning algorithm for stochastic processes with latent variables or memory, that provably converges under some regularity conditions. Please visit https://bit.ly/3kmovql for details.

2021-06-05 Spiking neural networks

In this post, we study a class of spiking network models based on continuous-time Markov chains with mutable variables.

2021-06-01 Convergence of biased stochastic approximation

Using techniques from biased stochastic approximation [KMMW19], we prove under some regularity conditions the convergence of the online learning algorithm proposed previously for mutable Markov processes.

2021-05-10 Path integrals and the Dyson formula

One of the deepest results in quantum field theory, to me, is the Dyson formula [nLa]. It describes the solution to the differential equation

2021-04-22 Proofs as programs - challenges and strategies for program synthesis

The Curry-Howard correspondence between proofs and programs suggests that we can exploit proof assistants for writing software. I will discuss the challenges behind a naïve execution of this idea, and some preliminary strategies for overcoming them. As an example, we will organize higher-order information in knowledge graphs using dependent type theory, and automate the answering of queries using a proof assistant. In another example, we will explore how decentralized proof assistants can enable mathematicians or programmers to work collaboratively on a theorem or application. If time permits, I will outline connections to canonical structures, reflection (ssreflect), transport, unification and universe management.

2021-03-23 Biased stochastic approximation with mutable processes

The goal of this post is to derive a general online learning recipe for training a mutable process \(\{Z_t,X_t\}\) to learn the true distribution \(Q_*(X)\) of a partially-observed Markov process \(\{X_t\}\). The recipe returns a generative distribution \(P(Z,X)\) whose marginal \(P(X)\) approximates \(Q_*(X).\)

2021-03-22 Relative inference with mutable processes

We introduce a information-theoretic objective, which is a form of relative information between a discriminative model and a generative model, for learning processes using models with mutable variables. This technique is known as relative inference (also called approximate inference, variational inference or variational Bayes). Such a technique is useful, for instance, for learning processes that contain latent variables.

2021-03-21 Process learning with relative information

Over the next few posts, we will derive a distributed learning algorithm for spiking neural networks with mutable variables that minimizes some natural notion of relative information and provably converges over time. We will model these spiking neural networks with stochastic processes: both discrete-time and continuous-time processes, with or without mutable variables.

2020-12-01 Biased stochastic approximation

We explore the convergence of continuous-time ordinary differential equations and their discrete-time analogs, such as stochastic approximation and gradient descent, through the lens of Lyapunov theory [B+98] [LR15]. From this perspective, we will study biased stochastic approximation [KMMW19] where the expectation of the stochastic updates conditioned on the past (which we call the conditional expectation) is not the same as the expectation of the stochastic updates under the stationary distribution (which we call the total expectation).

2020-10-23 Machine learning with relative information

We will reframe some common machine learning paradigms, such as maximum likelihood, stochastic gradients, stochastic approximation and variational inference, in terms of relative information.

2020-10-14 Path integrals and continuous-time Markov chains

We give an introduction to continuous-time Markov chains, and define path measures for these objects.

2020-10-07 Motivic relative information

So far, our definition of relative information studies the divergence between real-valued measures. In this post, we will explore motivic measures which take values more generally in some ring \(R\), and have some fun applying motivic relative information to zeta functions.

2020-10-05 Zeta functions, Mellin transforms and the Gelfand-Leray form

We outline the similarities between zeta functions appearing in number theory and in statistical learning.

2020-09-18 Conditional relative information and its axiomatizations

In this post, we will study the conditional form of relative information. We will also look at how conditional relative information can be axiomatized and extended to non-real-valued measures.

2020-09-08 Building foundations of information theory on relative information

The relative information [BF14] (also known as relative entropy or Kullback-Leibler divergence) is an important object in information theory for measuring how far a probability measure \(Q\) is from another probability measure \(P.\) Here, \(Q\) is usually the true distribution of some real phenomenon, and \(P\) is some model distribution.

2020-08-28 Motivic information, path integrals and spiking networks

I’m writing a series of posts that will explore the connections between these topics. Here is a rough outline of the series, which I will fill in slowly over time.

2020-08-07 Processes and variety maximization

“It’s a theory about processes, about the sequences and causal relations among things that happen, not the inherent properties of things that are. The fundamental ingredient is what we call an “event.” Events are things that happen at a single place and time; at each event there’s some momentum, energy, charge or other various physical quantity that’s measurable. The event has relations with the rest of the universe, and that set of relations constitutes its “view” of the universe. Rather than describing an isolated system in terms of things that are measured from the outside, we’re taking the universe as constituted of relations among events. The idea is to try to reformulate physics in terms of these views from the inside, what it looks like from inside the universe.”

2020-07-23 Adjunctions

Read about the Curry-Howard-Lambek correspondence. Some call it the holy trinity of Logic, Computation and Categories. Lambek adds the “objects as propositions” and “arrows as proofs” part to the mix. You may need to learn some basic category theory.

2020-05-26 Directed spaces and types

Joint work with Jin Xing Lim, Liang Ze Wong, Georgios Piliouras.

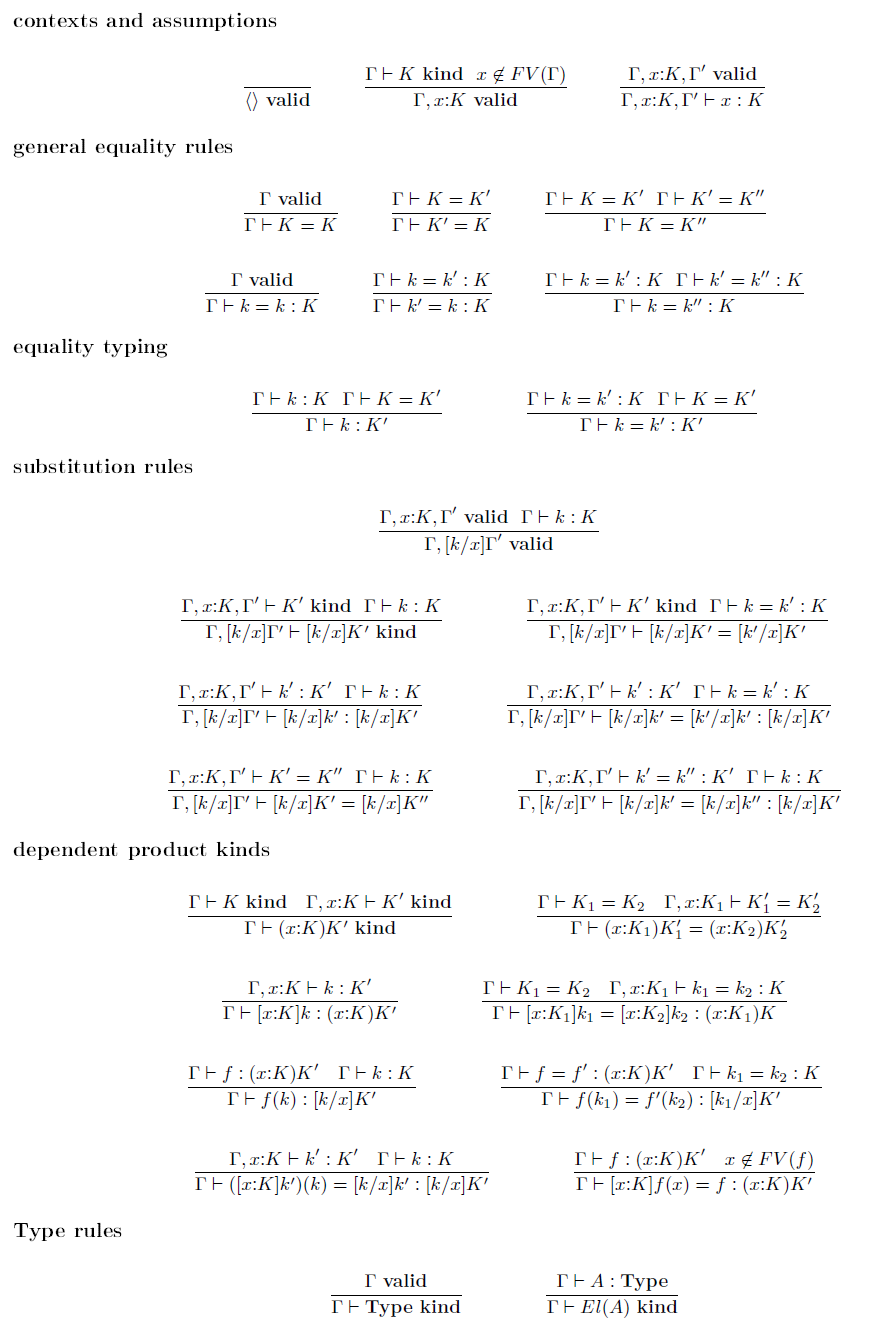

2020-05-21 Logical frameworks

Logical frameworks are formal meta-languages for specifying different kinds of object theories (e.g. logical theories and type theories).

2018-05-26 Machine reasoning and deep spiking networks

This is a short presentation which I shared at the Visions of AI Futures workshop organized by AI Singapore. I talked about the goal of building next-generation machine intelligence, through neural and symbolic modules that work seamlessly together to accomplish intuitive reasoning, as well as efficient, effective neural chips for every device.

2017-05-08 Artificial general intelligence for the internet of things

What do we need to achieve artificial general intelligence? How do we distribute intelligence over the internet-of-things? We’ll dive deep into the heart of the matter, which is machine reasoning. Following recent advances in mathematical foundations and homotopy type theory, we conclude that the crux is to formally separate intents from implementations. We can teach neural networks to understand these intents and to use a divide-and-conquer method for compiling these intents into implementations. Our goal is to outline a distributed strategy for accomplishing this moonshot.

2016-05-03 Exercise on sparse autoencoders

This exercise is the first of several posts I am writing, for those who want a mathematical and hands-on introduction to deep neural networks.

2016-05-03 Exercise on deep neural networks

Read the notes and complete the exercises for the section on “Building Deep Networks for Classification” in the UFLDL Tutorial. Complete all the programming tasks in Python. No starter code will be given for these exercises, but you may refer to the given Matlab codes for hints if you are stuck

2016-02-04 Hashing

Hashing is a method for compressing information from a high dimensional space into a smaller space. Hashing is commonly used in computer science to help us with many tasks. For instance, if two documents are (randomly) hashed to the same code, it is very likely that they are exactly the same. Also, in computer vision, we sometimes hash images in a clever way to find similar or related images through their codes.

2014-08-13 Statistics and machine learning

Below are some introductory texts for probability, statistics, machine learning and statistical learning theory. They are sorted roughly according to difficulty, so you can find a book that is suitable for where you are and what you need.

2014-08-02 Boltzmann machines and hierarchical models

The restricted Boltzmann machine (RBM) is a key statistical model used in deep learning. They are special form of Boltzmann machines where the underlying graph is a bipartite graph. Personally, I am more interested in Boltzmann machines because they represent a class of discrete energy models where the energy is quadratic. The dynamics of the model bears a lot of resemblance to those of Hopfield networks and Ising models. As an aside, normal distributions are continuous energy models where the energy is quadratic and positive definite.

2012-07-13 Studying model asymptotics with singular learning theory

Singular statistical models occur frequently in machine learning and computational biology. An important prob-lem in the learning theory of singular models is determin-ing their asymptotic behavior for massive data sets. In this talk, we give a brief introduction to Sumio Watanabe’s Singular Learning Theory, as outlined in his book “Algebraic Geometry and Statistical Learning Theory.” We will also explore the rich algebraic geometry and combinatorics that arise from studying the asymptotics of Bayesian integrals.